Bad bots account for 37% of all internet traffic, according to the Thales 2025 Bad Bot Report. Businesses worldwide lose an estimated $186 billion annually to bot-driven fraud, scraping, and automated attacks. Those numbers have been climbing every year, and the trajectory isn’t changing.

The harder problem is that bot detection has never been more difficult. Modern bots use residential IP pools to avoid IP-based blocking, machine learning to simulate human behavioral patterns, and CAPTCHA-solving farms to bypass challenge flows. The techniques that worked in 2020 are insufficient on their own in 2026.

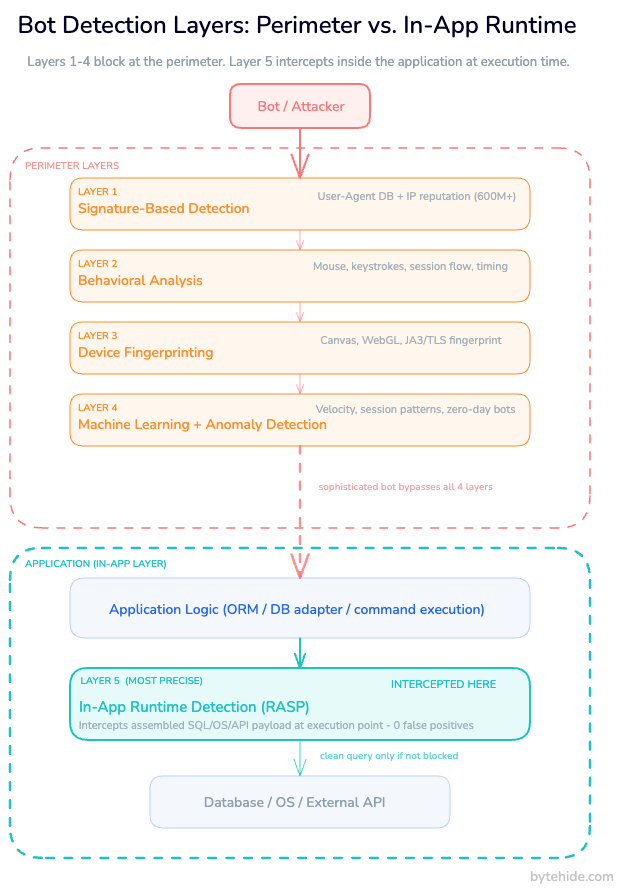

This guide covers the full spectrum of bot detection techniques, organized by layer of sophistication: from basic signature matching to runtime in-app detection. Each layer adds precision. Each layer closes a gap the previous one leaves open. By the end, you’ll have a clear picture of how to implement multi-layer bot detection across web applications, APIs, mobile apps, and desktop software.

What Are Malicious Bots?

Malicious bots are automated programs that interact with web applications, APIs, or software without human intent, executing tasks at a scale and speed no human could match. Not every bot is a threat. Search engine crawlers, monitoring agents, and performance testing tools are all automated, all legitimate. The distinction comes down to intent and authorization.

The most common categories of malicious bot attacks are:

Web scraping. Bots extract content, pricing data, or personal information from websites and APIs. At low volume, this might be a competitive annoyance. At scale, it consumes server resources, violates terms of service, and can expose sensitive data.

Credential stuffing. Bots test stolen username/password combinations against login endpoints. A dataset of 100 million leaked credentials, even with a 0.1% success rate, yields 100,000 compromised accounts.

Account takeover (ATO). An evolution of credential stuffing: once valid credentials are found, bots lock out the original user, exfiltrate stored payment data, or abuse the account for downstream fraud.

Carding attacks. Bots validate stolen credit card numbers by making small purchases, typically on e-commerce or donation endpoints with minimal friction.

Layer 7 DDoS. Rather than volumetric packet floods, these attacks send seemingly legitimate HTTP requests that exhaust application-layer resources: database queries, session creation, file generation.

Inventory hoarding and scalping. Bots monitor stock levels and purchase limited-inventory items faster than human users can respond. Typical targets are concert tickets, limited-edition products, and GPU launches.

API abuse. Automated clients query APIs at rates far exceeding legitimate usage patterns, extracting data or probing for logic vulnerabilities. APIs are particularly exposed because they have no UI layer: no CAPTCHA, no visual challenge, no friction.

Why Classic Bot Detection Fails

The core problem with the most commonly deployed bot detection techniques is that they were designed for unsophisticated bots. Bots have evolved. The defenses largely haven’t.

CAPTCHAs were once the standard gatekeeper. They’re no longer reliable alone. CAPTCHA-solving farms use human workers to solve challenges for fractions of a cent per solve. Machine learning models now solve image-based CAPTCHAs with accuracy exceeding 90% in some categories. Google’s reCAPTCHA v3 moved to behavioral scoring to address this, but determined attackers route traffic through real browsers to generate clean signals anyway.

IP blocking is effective against unsophisticated bots that reuse the same addresses. It’s almost irrelevant against adversarial operators who rent residential proxy networks. These proxies route traffic through real consumer devices, so the request looks like it originates from a home ISP in the target country, not a data center. IP rotation at scale costs attackers very little.

Rate limiting catches bots that make high volumes of requests from a single source. Distributed bot networks solve this by spreading requests across thousands of IPs, each operating below rate thresholds.
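To make the rate-limiting gap concrete, here is a minimal sketch of a per-IP sliding-window limiter. The `createRateLimiter` helper, its `limit` and `windowMs` options, and the documentation-range IP addresses are assumptions made for illustration; none of them come from a specific product.

```javascript
// Minimal per-IP sliding-window rate limiter (illustrative only).
function createRateLimiter({ limit, windowMs }) {
  const hits = new Map(); // ip -> timestamps (ms) of recent allowed requests

  return function allow(ip, now) {
    // Keep only the timestamps that still fall inside the window.
    const recent = (hits.get(ip) || []).filter((t) => now - t < windowMs);
    if (recent.length >= limit) {
      hits.set(ip, recent);
      return false; // over the per-IP threshold
    }
    recent.push(now);
    hits.set(ip, recent);
    return true;
  };
}

// One source sending 100 requests within a second: only the first 10 pass.
const single = createRateLimiter({ limit: 10, windowMs: 60_000 });
let singleAllowed = 0;
for (let i = 0; i < 100; i++) {
  if (single('203.0.113.7', i * 10)) singleAllowed++;
}

// The same 100 requests spread across 100 addresses: every one passes.
const distributed = createRateLimiter({ limit: 10, windowMs: 60_000 });
let distributedAllowed = 0;
for (let i = 0; i < 100; i++) {
  if (distributed(`198.51.100.${i}`, i * 10)) distributedAllowed++;
}

console.log({ singleAllowed, distributedAllowed });
// { singleAllowed: 10, distributedAllowed: 100 }
```

The limiter does exactly what it was built to do. The distributed run never trips it because no single address comes close to the threshold.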

User-agent filtering blocks requests with bot-identifying user-agent strings. Any bot author who wants to evade this sets the user-agent to Chrome on Windows. Takes about 30 seconds to implement.

Each of these techniques has a place in a defense stack, but none is sufficient alone. And critically, all of them operate at the network or HTTP layer, before the request reaches application code. A sufficiently sophisticated bot that clears perimeter controls will reach your application logic. And once it does, none of those defenses can help.

Bot Detection Techniques: From Signature to Runtime

Effective bot detection is layered. Each technique addresses a different class of attacker. Using only the lower layers leaves you exposed to bots that have evolved past them.

Layer 1: Signature-Based Detection

Signature-based detection is the simplest layer: maintaining a database of known bot identifiers and blocking or flagging requests that match.

The primary signals are:

- User-agent strings: Bots often use recognizable strings (“python-requests/2.31”, “Go-http-client/1.1”, “Scrapy/2.11”). A maintained list of known scrapers, headless browsers, and automated tools provides quick wins against unsophisticated operators.

- IP reputation: IPs associated with known data centers, Tor exit nodes, open proxies, and threat intelligence feeds. ByteHide Monitor aggregates 600M+ known malicious IPs across 7 threat intelligence lists.

- Referrer and header anomalies: Absent or malformed referrer headers, missing accept-language, out-of-spec header ordering.

Against simple bots, signature detection stops a significant volume of traffic with minimal computational overhead. The limitation is obvious: any attacker who spends five minutes reviewing your blocking rules can change their signatures to match legitimate traffic.
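Stripped of any product, the signals above reduce to a few string checks. The sketch below is a rough illustration: the `BOT_UA_PATTERNS` list and the `checkSignatures` function are hypothetical, and a real signature database would be far larger and continuously maintained.

```javascript
// Hypothetical signature list for illustration; not a real product's database.
const BOT_UA_PATTERNS = [/python-requests/i, /Go-http-client/i, /Scrapy/i, /HeadlessChrome/i];

// Expects header names in lowercase, as Node.js exposes them on req.headers.
function checkSignatures(headers) {
  const reasons = [];
  const ua = headers['user-agent'] || '';
  if (ua === '') reasons.push('missing user-agent');
  if (BOT_UA_PATTERNS.some((pattern) => pattern.test(ua))) reasons.push('known bot user-agent');
  if (!headers['accept-language']) reasons.push('missing accept-language');
  return { flagged: reasons.length > 0, reasons };
}

// A default scraping client is caught on two signals.
const scraper = checkSignatures({ 'user-agent': 'python-requests/2.31' });

// The same client with spoofed browser headers passes cleanly.
const spoofed = checkSignatures({
  'user-agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36',
  'accept-language': 'en-US,en;q=0.9',
});

console.log(scraper.flagged, scraper.reasons); // true [ 'known bot user-agent', 'missing accept-language' ]
console.log(spoofed.flagged); // false
```

The second call is the limitation in miniature: two header values are enough to defeat the check.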

Here’s a basic configuration for bot blocking using ByteHide Monitor in a .NET application:

```csharp
// Program.cs (ASP.NET Core)
using ByteHide.Monitor;

var builder = WebApplication.CreateBuilder(args);

builder.Services.AddByteHideMonitor(options =>
{
    options.ApiKey = Environment.GetEnvironmentVariable("BYTEHIDE_API_KEY");

    // Signature-based bot blocking: 390+ user agents across 21 categories
    options.Firewall.BotBlocking.Enabled = true;
    options.Firewall.BotBlocking.Categories = BotCategories.All;
    options.Firewall.BotBlocking.Action = BlockAction.BlockRequest;

    // IP threat intelligence: 600M+ known malicious IPs
    options.Firewall.ThreatIntelligence.Enabled = true;
    options.Firewall.ThreatIntelligence.Action = BlockAction.BlockRequest;
});

var app = builder.Build();

app.UseByteHideMonitor();

app.MapGet("/api/products", () => { /* your handler */ });

app.Run();
```

The equivalent in Node.js:

```javascript
// app.js (Express)
const express = require('express');
const { ByteHideMonitor } = require('@bytehide/monitor');

const app = express();

ByteHideMonitor.init({
  apiKey: process.env.BYTEHIDE_API_KEY,
  firewall: {
    botBlocking: {
      enabled: true,
      categories: 'all', // 390+ signatures, 21 categories
      action: 'block'
    },
    threatIntelligence: {
      enabled: true,
      lists: 'all', // 7 threat intel lists, 600M+ IPs
      action: 'block'
    }
  }
});

app.use(ByteHideMonitor.middleware());

app.get('/api/products', (req, res) => { /* your handler */ });

app.listen(3000);
```

Signature-based detection handles the low-effort attack surface. Move to the next layer for anything more determined.

Layer 2: Behavioral Analysis

Behavioral analysis examines how a client interacts with your application, not just who it claims to be. Humans exhibit recognizable, irregular patterns. Unsophisticated bots don’t.

Signals that distinguish human from bot behavior:

- Mouse movement and cursor path: Human cursors follow slightly erratic arcs, pause, accelerate. Bots often move in straight lines or don’t move at all.

- Keystroke timing and dynamics: The rhythm between keystrokes is distinctive per human. Bots typically inject text instantly or at artificially regular intervals.

- Scroll behavior: Humans scroll in response to content. Bots often jump directly to target elements without any scrolling.

- Session flow: Human users browse: homepage, navigation, product pages, back. Bots go straight to the endpoint they want.

- Time-on-page distribution: Human session durations follow natural statistical distributions. Bot sessions cluster at the minimum viable time.

- Form interaction: Humans tab through fields, make typos, backspace. Bots fill forms atomically.

Behavioral analysis is powerful but not infallible. Headless browser frameworks like Playwright and Puppeteer can simulate mouse movement and keyboard events. High-end bot operators run real browsers on real devices to generate entirely authentic behavioral telemetry. At the hardware and browser level, that data is genuinely indistinguishable from a human’s.
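As one example of turning these signals into a check, the sketch below scores keystroke timing by how regular the gaps between key presses are. The `looksScripted` function and its thresholds (`minCv`, `minMeanMs`) are assumptions chosen for illustration, not tuned values.

```javascript
// Mean and coefficient of variation (std dev / mean) of the gaps between timestamps.
function intervalStats(timestamps) {
  const gaps = [];
  for (let i = 1; i < timestamps.length; i++) gaps.push(timestamps[i] - timestamps[i - 1]);
  const mean = gaps.reduce((sum, g) => sum + g, 0) / gaps.length;
  const variance = gaps.reduce((sum, g) => sum + (g - mean) ** 2, 0) / gaps.length;
  const cv = mean === 0 ? 0 : Math.sqrt(variance) / mean;
  return { mean, cv };
}

// Flags input that is implausibly fast or implausibly regular.
// Thresholds are hypothetical placeholders.
function looksScripted(timestamps, { minCv = 0.15, minMeanMs = 30 } = {}) {
  if (timestamps.length < 5) return false; // too little data to judge
  const { mean, cv } = intervalStats(timestamps);
  return mean < minMeanMs || cv < minCv;
}

const humanTyping = [0, 140, 310, 395, 640, 720, 990]; // uneven rhythm
const fixedDelayBot = [0, 50, 100, 150, 200, 250];      // perfectly regular
const injectedText = [0, 1, 2, 3, 4, 5];                // effectively instant

console.log(looksScripted(humanTyping));   // false
console.log(looksScripted(fixedDelayBot)); // true
console.log(looksScripted(injectedText));  // true
```

A bot that adds random jitter to its delays would pass this check, so it works as one input to a score rather than as a verdict.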

Layer 3: Device Fingerprinting

Device fingerprinting generates a persistent identifier for a client based on the characteristics of their browser or device, without requiring a cookie or login.

Browser fingerprinting aggregates signals including:

- Canvas rendering output (browsers render the same operations slightly differently per GPU and driver)

- WebGL renderer and vendor strings

- Installed fonts and their measurements

- Screen resolution and color depth

- Timezone and language settings

- Browser plugin enumeration

- Audio context characteristics

- HTTP/TLS fingerprint (the specific cipher suites, extensions, and their order in a TLS ClientHello, known as JA3)

A complete fingerprint is reasonably stable for a given device and browser combination, even across sessions. This lets you flag a device previously associated with abusive behavior, regardless of IP rotation.

The limitation: headless browsers have well-known fingerprinting evasion. Tools like FingerprintJS Anti-Detection and Puppeteer Extra’s stealth plugin spoof canvas, WebGL, and other signals. TLS fingerprinting is similarly bypassable with enough effort. Fingerprinting is a strong signal when combined with other layers, not a standalone defense.
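For a sense of how collected signals become a persistent identifier, here is a minimal sketch that hashes a canonical serialization of them. The signal names and values are made up for the example, and `fingerprint` is a hypothetical helper. Production fingerprinting libraries weight and normalize signals far more carefully.

```javascript
const { createHash } = require('crypto');

// Serialize with sorted keys so property order does not change the resulting hash.
function fingerprint(signals) {
  const canonical = Object.keys(signals)
    .sort()
    .map((key) => `${key}=${JSON.stringify(signals[key])}`)
    .join('|');
  return createHash('sha256').update(canonical).digest('hex');
}

// Two visits from the same browser, collected in a different order.
const visitA = { canvas: 'a91f', webglVendor: 'ExampleGPU', timezone: 'Europe/Madrid', fonts: ['Arial', 'Helvetica'] };
const visitB = { timezone: 'Europe/Madrid', fonts: ['Arial', 'Helvetica'], webglVendor: 'ExampleGPU', canvas: 'a91f' };

// The same browser with a stealth plugin randomizing the canvas output.
const spoofedVisit = { ...visitA, canvas: '0000' };

console.log(fingerprint(visitA) === fingerprint(visitB));       // true
console.log(fingerprint(visitA) === fingerprint(spoofedVisit)); // false
```

The IP address is deliberately not an input, which is why the identifier survives IP rotation. The last line shows the flip side: spoofing a single signal yields a fresh identity.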

Layer 4: Machine Learning and Anomaly Detection

ML-based bot detection moves beyond individual signals to model the overall pattern of a session or request sequence.

Where earlier layers evaluate individual requests in isolation, ML models evaluate behavior across a session or over time: velocity patterns, API call sequences, access patterns across endpoints, the statistical shape of request timing. A human browsing an e-commerce site accesses a predictable sequence of endpoints in a predictable order with predictable delays. A credential-stuffing bot hits the login endpoint repeatedly with minimal variation between attempts.

Supervised models train on labeled datasets of human and bot traffic. Unsupervised models detect anomalies by identifying traffic that deviates significantly from established behavioral baselines.

The practical advantage: ML-based detection can identify zero-day bot behavior (traffic from operators who haven’t been seen before) without requiring a known signature. It adapts to new patterns with retraining.

The practical disadvantage: training effective models requires significant data volume and continuous updates. False positive rates need careful tuning; aggressive models flag legitimate users, which is a real cost. ML detection is probabilistic, producing confidence scores rather than binary decisions. You have to decide what threshold justifies a block versus a challenge.
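The threshold decision can be sketched with a simple statistical baseline standing in for a trained model. This is a z-score against observed traffic, not machine learning proper. The baseline numbers and the `challengeAt` and `blockAt` cut-offs are assumptions for illustration.

```javascript
// How many standard deviations a value sits from the baseline mean.
function zScore(value, baseline) {
  const mean = baseline.reduce((sum, v) => sum + v, 0) / baseline.length;
  const variance = baseline.reduce((sum, v) => sum + (v - mean) ** 2, 0) / baseline.length;
  const sd = Math.sqrt(variance);
  if (sd === 0) return value === mean ? 0 : Math.sign(value - mean) * Infinity;
  return (value - mean) / sd;
}

// Map an anomaly score to a graduated response. Cut-offs are hypothetical.
function decide(score, { challengeAt = 3, blockAt = 6 } = {}) {
  if (score >= blockAt) return 'block';
  if (score >= challengeAt) return 'challenge';
  return 'allow';
}

// Requests per minute observed for ordinary sessions (made-up baseline).
const baseline = [4, 6, 5, 7, 5, 6, 4, 5, 6, 5];

console.log(decide(zScore(6, baseline)));  // allow
console.log(decide(zScore(9, baseline)));  // challenge
console.log(decide(zScore(40, baseline))); // block
```

Lowering `challengeAt` catches more bots and challenges more real users. That trade-off is the tuning work described above.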

Layer 5: In-App Runtime Detection

The previous four layers share a common characteristic: they all operate at the perimeter of your application. They examine incoming requests before those requests reach your code. That’s the right place to stop most bot traffic.

But sophisticated bots that clear perimeter controls will reach application logic. And when they do, they execute the attack directly against your database queries, your command execution, your template rendering.

In-app runtime detection works differently. Rather than inspecting requests at the boundary, it monitors execution inside the application, intercepting operations at the exact point where a bot payload would cause damage.

Take a SQL injection from a credential-stuffing bot. The request arrives at your application. Perimeter detection examines the IP, headers, and payload patterns. If it clears those checks, the request reaches your ORM or database adapter. Runtime detection intercepts there, examining the actual SQL statement about to execute, not a pattern in an HTTP parameter. It sees the fully-assembled query, including whatever the bot injected, at the moment before it runs.

Two advantages follow from this that no perimeter control can match:

Precision with fewer false positives. Perimeter WAFs make probabilistic decisions based on pattern matching against request parameters. They can’t see whether a suspicious-looking parameter actually reaches a vulnerable code path. Runtime detection sees exactly what’s happening, at the exact execution point, with full application context. A payload that looks suspicious in a URL might be benign in context. A payload that looks benign at the HTTP layer might be catastrophic in the SQL it produces.

Forensic completeness. When a perimeter WAF blocks a request, you get: source IP, user agent, blocked URL. When runtime detection triggers, you get the line of code, the method, the exact payload as it arrived at the execution point, the confidence level, and the attack classification. That information feeds directly into application security monitoring and, if you’re using a platform with SAST integration, into vulnerability prioritization.

In-app runtime detection also covers attack surfaces that perimeter controls can’t reach: API endpoints that accept complex objects, deserialization paths, command execution triggered by application logic rather than raw request parameters.

Here’s how Monitor’s runtime detection intercepts a SQL injection from bot-driven credential stuffing in .NET:

```csharp
// No code changes required in your data access layer.
// Monitor instruments at the EF Core/ADO.NET execution layer automatically.
[HttpPost("/api/auth/login")]
public async Task<IActionResult> Login([FromBody] LoginRequest request)
{
    // If the assembled query contains injection patterns (e.g. OR 1=1--),
    // Monitor blocks it before it reaches the database and logs the full incident.
    var user = await _db.Users
        .Where(u => u.Email == request.Email && u.Password == request.Password)
        .FirstOrDefaultAsync();

    return user != null ? Ok(new { token = GenerateToken(user) }) : Unauthorized();
}
```

The same interception for a Node.js + MongoDB API being probed by a scraping bot:

```javascript
// Express + Mongoose
// Monitor intercepts at the Mongoose execution layer; no query changes needed.
app.post('/api/search', async (req, res) => {
  // Bot payload: { "query": { "$where": "this.price < 1000" } }
  // Monitor detects the $where NoSQL injection before execution and blocks it.
  const results = await Product.find({ name: req.body.query }); // intercepted
  res.json(results);
});
```

In my experience, teams that invest heavily in CAPTCHA flows and IP blocklists often discover those controls are bypassed within weeks by bots using residential proxies and real browser fingerprints. The runtime layer catches what perimeter controls miss because it’s positioned where the attack actually lands.
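To illustrate the general idea of checking at the execution point, independent of any product, the sketch below wraps a query function and inspects the fully assembled filter immediately before it runs. This is not how ByteHide Monitor is implemented. The `guard` and `findOperators` helpers, the operator list, and `fakeFind` are all hypothetical stand-ins.

```javascript
// MongoDB operators that execute JavaScript; an illustrative, non-exhaustive list.
const DANGEROUS_OPERATORS = new Set(['$where', '$function', '$accumulator']);

// Walk the assembled filter and return the path of every dangerous operator found.
function findOperators(value, path = '') {
  if (value === null || typeof value !== 'object') return [];
  const found = [];
  for (const [key, child] of Object.entries(value)) {
    const here = path ? `${path}.${key}` : key;
    if (DANGEROUS_OPERATORS.has(key)) found.push(here);
    found.push(...findOperators(child, here));
  }
  return found;
}

// Wrap any function that executes a filter so the check runs right before execution.
function guard(executeFind) {
  return async function guarded(filter) {
    const hits = findOperators(filter);
    if (hits.length > 0) {
      throw new Error(`Blocked query: dangerous operator at ${hits.join(', ')}`);
    }
    return executeFind(filter);
  };
}

// Stand-in for a real driver call such as a collection lookup.
const fakeFind = async (filter) => [{ matched: filter }];
const guardedFind = guard(fakeFind);

guardedFind({ name: 'lamp' }).then((rows) => console.log('allowed, rows:', rows.length));
guardedFind({ name: { $where: 'this.price < 1000' } }).catch((err) => console.log(err.message));
```

The check sees the filter exactly as the database would receive it, whichever request field, header, or deserialized object the operator arrived through.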

Bot Detection Across Platforms

Most bot detection literature focuses exclusively on web applications. Bots target every execution context where automated interaction produces value for an attacker, and the detection requirements differ significantly per platform.

Web Applications

All five layers are applicable. Behavioral analysis and device fingerprinting are particularly relevant because the browser context provides rich signals: DOM interaction events, JavaScript execution timing, WebGL rendering. Start here before expanding to other platforms.

APIs

APIs are the most exposed surface and the least protected. No UI means no CAPTCHA, no behavioral signals from mouse or keyboard, and typically no session management that provides anomaly context. Rate limiting and IP reputation are commonly deployed, but both fail against distributed bot networks operating below thresholds.

Runtime detection is the most reliable layer for APIs. When bots target API endpoints, they probe for injection vectors in request parameters, abuse logic with unexpected input combinations, or extract data by iterating over predictable resource identifiers. All of these attack patterns are visible at the execution layer, not the HTTP layer.

```javascript
// Protecting a sensitive API endpoint with Monitor
app.get('/api/users/:id', async (req, res) => {
  // Monitor detects: path traversal variants, velocity anomalies,
  // SSRF patterns if the ID resolves to internal resources.
  const user = await User.findById(req.params.id); // intercepted
  res.json(user);
});
```

Mobile Applications

Mobile apps face a distinct class of bot threat: automated interaction via emulators. Attackers run hundreds of Android or iOS emulator instances to automate account creation, bonus farming, or testing stolen credentials.

Runtime protection at the mobile layer detects the execution environment itself. Monitor’s mobile detection identifies:

- Emulators: Android Studio Emulator, Genymotion, Bluestacks, MEmu, NoxPlayer, LDPlayer, and Xcode Simulator

- Rooted/jailbroken devices: Magisk, SuperSU, Cydia, unc0ver — environments where standard security controls can be bypassed at the OS level

- Hooking frameworks: Frida and Xposed injection, used by automated testing bots to intercept and manipulate app logic at runtime

- Debugging environments: Active debugger attachment, which indicates instrumented automated execution rather than genuine user interaction

Desktop Applications

Desktop applications face automation bots using GUI scripting tools (AutoHotkey, PyAutoGUI, Sikuli) or process injection to interact with application logic programmatically. Runtime protection detects process injection, debugger attachment, and memory inspection tools that indicate automated rather than human interaction.

Detecting AI-Powered and Agentic Bots

The emerging class of bot threat is fundamentally different from what preceded it. Agentic bots use large language models to understand context, adapt strategy in response to defenses, and generate behavior that is contextually indistinguishable from human intent.

Where a traditional credential-stuffing bot executes a fixed loop, an AI-powered bot can read a login page, understand that it presents a multi-step authentication flow, reason about what fields to populate and in what order, and adjust when it encounters an unexpected state. CAPTCHA-solving via LLM-assisted reasoning is already a documented capability.

The detection challenge: signature-based and simple behavioral approaches fail against adaptive bots because there’s no fixed pattern to match. Each interaction is dynamically generated.

What does work:

Sequence-level anomaly detection. Individual requests look human. Sequences of requests reveal intent. An AI agent navigating an e-commerce site to perform price arbitrage will access product categories, individual products, and price comparison flows in patterns that, aggregated over time, deviate from genuine shopping behavior.

LLM prompt injection detection. If your application processes natural language input that influences AI-driven logic (chatbots, AI assistants, AI-mediated search), prompt injection is a specific bot-adjacent attack surface. Monitor includes detection for prompt injection patterns: jailbreak prompts, role manipulation, delimiter injection, and encoded payloads. This is currently the only RASP-level control for this attack surface.

Rate analysis at the action level. AI agents are fast, but not in the way humans are fast. They tend to be uniformly fast across actions that humans would find cognitively demanding, and uniformly accurate in ways that humans aren’t: zero typos, zero hesitation on form fields.
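Sequence-level scoring can be sketched with a first-order transition model: learn how often one page type follows another in ordinary sessions, then measure how surprising a new session is. The training sessions, page labels, and `floor` probability below are invented for the example. A production model would train on far more data.

```javascript
// Count page-to-page transitions across known-good sessions.
function trainTransitions(sessions) {
  const counts = new Map(); // "from>to" -> occurrences
  const totals = new Map(); // from -> total outgoing transitions
  for (const session of sessions) {
    for (let i = 1; i < session.length; i++) {
      const from = session[i - 1];
      const key = `${from}>${session[i]}`;
      counts.set(key, (counts.get(key) || 0) + 1);
      totals.set(from, (totals.get(from) || 0) + 1);
    }
  }
  return { counts, totals };
}

// Average negative log-probability per transition; higher means less typical.
// Unseen transitions get a small floor probability instead of zero.
function surprise(model, session, floor = 0.01) {
  let total = 0;
  for (let i = 1; i < session.length; i++) {
    const from = session[i - 1];
    const seen = model.counts.get(`${from}>${session[i]}`) || 0;
    const outOf = model.totals.get(from) || 0;
    const p = outOf === 0 ? floor : Math.max(seen / outOf, floor);
    total += -Math.log(p);
  }
  return total / Math.max(session.length - 1, 1);
}

const model = trainTransitions([
  ['home', 'category', 'product', 'cart', 'checkout'],
  ['home', 'search', 'product', 'product', 'cart'],
  ['home', 'category', 'product', 'category', 'product'],
]);

const shopper = ['home', 'category', 'product', 'cart'];
const arbitrageAgent = ['product', 'compare', 'product', 'compare', 'product'];

console.log(surprise(model, shopper) < surprise(model, arbitrageAgent)); // true
```

Every request in the agent's session is individually valid. Only the order of the requests gives it away.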

The distinction between malicious AI agents and legitimate ones (search engine crawlers, AI content indexers) is becoming a meaningful security concern. Legitimate agentic traffic from services like OpenAI’s web crawler or Perplexity’s indexer will grow. Distinguishing authorized from unauthorized automated agents requires explicit allow-listing of known agent signatures combined with runtime context to verify behavior matches stated purpose.

Perimeter Detection vs. In-App Detection

| Criterion | Perimeter (WAF, CDN, Proxy) | In-App Runtime (RASP) |
|---|---|---|
| Where it operates | Network/HTTP boundary, before application code | Inside application code, at execution point |
| What it inspects | Request headers, IP, URL patterns, payload strings | Assembled queries, commands, operations before execution |
| Precision | Pattern-matching against request parameters | Sees exact code impact: which function, which line, what payload |
| False positive rate | Higher (no application context) | Lower (knows whether vulnerable code is actually reached) |
| Infrastructure dependency | Requires DNS/proxy/CDN control | SDK integration; no infrastructure changes |
| Mobile and desktop coverage | Web traffic only | Web, mobile, desktop, IoT (same SDK) |
| Forensic detail | Source IP, request URL, blocked payload | Code line, method name, assembled payload, confidence score, attack classification |
| Handles perimeter bypass | No | Yes (designed for bots that clear perimeter controls) |
| SAST integration | None | Feeds runtime exploitation data back to static analysis |
| Platforms covered | Web only | Web, mobile (Android/iOS), desktop (.NET), IoT |
| Configuration | DNS/load balancer access required | SDK initialization + dashboard |

How to Implement Bot Detection in Your Application

A practical implementation sequence, ordered by impact and deployment effort:

Step 1: Identify your attack surface. Where does bot activity cause real harm? Login endpoints (credential stuffing), search and product endpoints (scraping), checkout flows (carding), API endpoints (data extraction). Prioritize protection where the damage is highest, not where it’s easiest to add a control.

Step 2: Deploy signature and IP reputation controls first. This handles the unsophisticated majority of bot traffic with minimal overhead. Block known bot user agents, data center IP ranges, and threat intelligence feeds.

Step 3: Add behavioral analysis for high-value endpoints. Instrument mouse events, keystroke timing, and session flow on your most sensitive flows: login, registration, checkout. This catches bots that generate real HTTP traffic but no human browser interaction.

Step 4: Integrate runtime detection for data access and command execution. For API endpoints, database query paths, and code that executes commands based on user input, add in-app runtime detection. This closes the gap perimeter controls leave open for sophisticated bots with legitimate-looking network signatures.

Step 5: Define response actions per context. Not every detected bot warrants a hard block. A graduated response approach works better for ambiguous signals:

```csharp
builder.Services.AddByteHideMonitor(options =>
{
    options.ApiKey = Environment.GetEnvironmentVariable("BYTEHIDE_API_KEY");

    // High-confidence signatures: block immediately
    options.Firewall.BotBlocking.Enabled = true;
    options.Firewall.BotBlocking.Action = BlockAction.BlockRequest;

    // SQL injection at runtime: block and alert
    options.Detections.SqlInjection.Action = DetectionAction.BlockAndNotify;
    options.Detections.SqlInjection.Notification = new SlackNotification
    {
        WebhookUrl = Environment.GetEnvironmentVariable("SLACK_WEBHOOK"),
        Message = "SQL injection blocked: {payload} from {ip}"
    };

    // Ambiguous signals: log for analysis, don't block yet
    options.Detections.NoSqlInjection.Action = DetectionAction.LogOnly;

    // Return a generic error so detection logic isn't revealed to attackers
    options.Firewall.BotBlocking.CustomResponse = new CustomResponse
    {
        StatusCode = 429,
        Body = "{ \"error\": \"Rate limit exceeded\" }"
    };
});
```

Step 6: Monitor and iterate. Bot operators adapt. Review blocked traffic logs regularly to identify new evasion patterns. Update signature databases. Adjust ML model thresholds based on false positive rates in production. The stack that works today won’t be sufficient in a year without maintenance.

Conclusion

Bot detection isn’t a single technology decision. It’s a layered strategy where each layer addresses the evasion capabilities of a specific class of attacker.

Signature-based controls handle the unsophisticated majority. Behavioral and fingerprinting layers catch automated browsers that try to blend in. ML-based anomaly detection identifies novel patterns without prior signatures. And runtime detection closes the final gap: the sophisticated bot that clears every perimeter control and reaches your application logic.

The trend worth watching over the next few years is agentic bots that adapt in real time using LLMs. Static rules and fixed behavioral thresholds will degrade in effectiveness as agents get better at simulating contextually appropriate behavior. The defenses that remain durable are those positioned at the execution layer, where behavioral simulation becomes irrelevant and the only thing that matters is what the bot actually does when it reaches your code.

If you’re looking to add runtime in-app bot detection without infrastructure changes, ByteHide Monitor deploys as an SDK, covers web, mobile, and desktop contexts from the same integration, and includes application security monitoring with full incident forensics.

FAQ

What are the main bot detection techniques?

Bot detection techniques range from signature-based detection (matching known bot user agents and malicious IP addresses) to behavioral analysis (examining mouse movement, keystroke patterns, and session flow), device fingerprinting (generating unique client identifiers from browser and hardware characteristics), machine learning anomaly detection (identifying traffic patterns that deviate from human baselines), and runtime in-app detection (intercepting attacks at the code execution point inside the application). Effective bot detection combines multiple layers, since each technique has specific evasion weaknesses that the next layer addresses.

How do you detect bots without using a CAPTCHA?

CAPTCHA-free bot detection relies on passive signals that don’t require user interaction: IP reputation checks against threat intelligence databases, behavioral analysis of mouse movement and session flow, device fingerprinting via browser characteristics, HTTP and TLS fingerprint analysis (JA3), and runtime monitoring of application-layer execution patterns. These passive techniques are generally preferable to CAPTCHAs because they don’t degrade user experience and are harder for attackers to target directly.

What is in-app bot detection and how is it different from WAF bot protection?

In-app bot detection (also called runtime bot detection) operates inside the application at the code execution layer, intercepting database queries, command execution, and API calls at the moment they’re about to execute. A traditional WAF operates at the network perimeter, inspecting HTTP requests before they reach application code. The key difference: a WAF makes decisions based on request patterns without knowing whether those patterns actually reach a vulnerable code path. In-app detection sees exactly which function is executing, what the assembled payload looks like, and what the execution context is, producing fewer false positives and more complete forensic data.

How do you block bots on mobile apps?

Mobile bot detection requires runtime protection at the execution environment level: detecting automated emulators (Android Studio Emulator, Genymotion, Bluestacks), identifying rooted or jailbroken devices that can bypass standard controls, detecting hooking frameworks like Frida and Xposed that bots use to instrument app logic, and identifying debugger attachment that indicates automated execution. Traditional perimeter controls like IP blocking and CAPTCHA can be applied to the API endpoints mobile apps consume, but environment-level detection is necessary to catch bots that operate inside the application via emulators.

Can AI-powered bots evade detection?

AI-powered bots can evade signature-based detection, behavioral rules with fixed thresholds, and CAPTCHA challenges. What they cannot easily evade is execution-layer detection: regardless of how convincingly a bot simulates human behavior at the request level, when it executes a SQL injection, the assembled query at the database adapter layer reveals the attack pattern. Runtime detection operates after the bot has cleared all perimeter controls, at the point where the payload actually executes, making behavioral simulation irrelevant to detection accuracy.